The $50,000 Mistake

We see this request on almost every discovery call: “We want to fine-tune Llama-3 on our internal wiki so it knows our company policies.”

It sounds logical. Humans learn by reading, so shouldn’t AI learn by training? No. Using fine-tuning to teach a model “Knowledge” is one of the most expensive mistakes you can make. You can spend $50,000 on GPU credits, wait weeks for the training run, and still end up with a model that confidently hallucinates your HR policy.

The Medical Student Analogy

To understand why, think of an LLM as a Medical Student.

Pre-Training: This is High School. The model learns English, grammar, and basic reasoning.

Fine-Tuning: This is Medical School. The student learns how to be a doctor. They learn the jargon, the bedside manner, and the format of a diagnosis.

RAG (Retrieval-Augmented Generation): This is the Textbook. When a patient walks in, the doctor looks up the specific dosage for a rare disease.

You do not want a doctor who tries to memorize every drug dosage in the world (Fine-Tuning). They will misremember. You want a doctor who knows how to look it up in the textbook (RAG).

The "Knowledge vs. Behavior" Framework

When should you use which?

Use RAG for Knowledge (Facts):

“What was our revenue in Q3?”

“What is the refund policy for Product X?”

Why: Facts change. You can update a database record in seconds. You can’t retrain a model every time a number changes.
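To make the "facts change" point concrete, here is a minimal sketch of the retrieval step. It is an illustration only: it uses plain keyword overlap instead of a real vector database, and the `DOCS` contents, `retrieve`, and `build_prompt` are hypothetical names and placeholder data invented for this example.

```python
import re

# Hypothetical in-memory knowledge base with placeholder facts.
# A production system would typically use a vector database instead.
DOCS = {
    "refund-policy": "Product X can be refunded within 30 days of purchase.",
    "q3-revenue": "Q3 revenue was 4.2 million dollars.",
}

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, docs):
    """Return the (doc_id, text) pair that shares the most words with the question."""
    q = tokenize(question)
    return max(docs.items(), key=lambda item: len(q & tokenize(item[1])))

def build_prompt(question, docs):
    """Put the retrieved fact in front of the question before calling a model."""
    doc_id, text = retrieve(question, docs)
    return f"Answer using only this context.\n[{doc_id}] {text}\n\nQuestion: {question}"

question = "What is the refund policy for Product X?"
print(build_prompt(question, DOCS))

# The policy changed: update one record. No training run is involved.
DOCS["refund-policy"] = "Product X can be refunded within 60 days of purchase."
print(build_prompt(question, DOCS))
```

The second prompt carries the new policy the moment the record is edited, which is the property a fine-tuned model cannot give you.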

Use Fine-Tuning for Behavior (Form):

“Speak in the tone of a playful pirate.”

“Always output the answer in valid JSON format.”

“Translate English to SQL code.”

Why: The facts might change, but the format is constant, so it is worth training in once.
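Here is a rough sketch of what "training for behavior" data can look like, using the English-to-SQL case. It assumes a chat-style JSONL layout with a `messages` list, which many fine-tuning toolchains accept in some form; the exact schema depends on your tooling, and the table names, prompts, and helper names below are hypothetical.

```python
import json

SYSTEM = "Translate the request into a single SQL query. Output SQL only."

# Hypothetical examples: the requests vary, the output format never does.
RAW_EXAMPLES = [
    ("List all customers in Texas.",
     "SELECT * FROM customers WHERE state = 'TX';"),
    ("Count orders placed in 2023.",
     "SELECT COUNT(*) FROM orders WHERE order_year = 2023;"),
]

def to_record(request, sql):
    """Wrap one (request, sql) pair as a chat-style training record."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": request},
            {"role": "assistant", "content": sql},
        ]
    }

def to_jsonl(examples):
    """Serialize the examples as JSON Lines: one record per line."""
    return "\n".join(json.dumps(to_record(r, s)) for r, s in examples)

print(to_jsonl(RAW_EXAMPLES))
```

Notice that nothing in these records is a company fact the model has to remember. Every example teaches the same habit: take a request, return SQL and nothing else.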

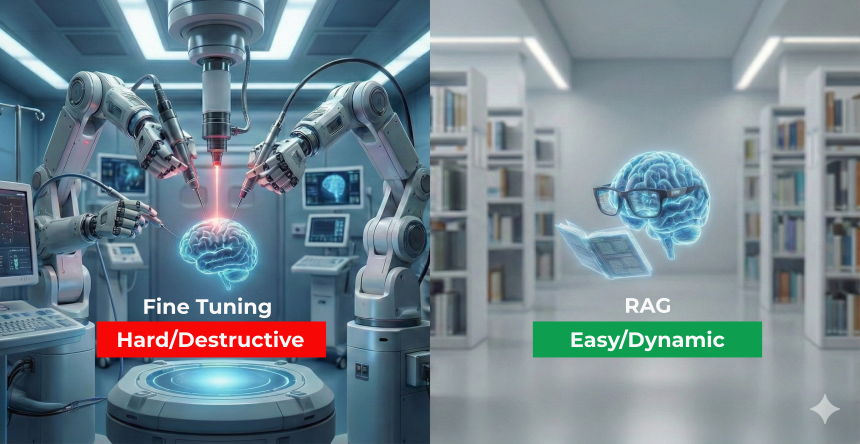

Are you building the wrong architecture? Find out if you need a Vector Database or a GPU Cluster.

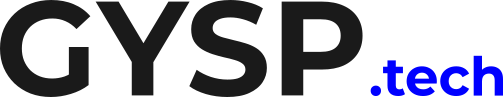


The Hybrid Approach

The most advanced teams do both. They use Fine-Tuning to teach the model to use their internal tools (Behavior). They use RAG to feed the model the latest customer data (Knowledge). But if you have to pick one to start? Start with RAG. It’s cheaper, faster to ship, and easier to keep up to date.
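As a rough sketch of how the two halves fit together: retrieval supplies the facts at request time, and the model (fine-tuned or not) supplies the behavior. Everything here is hypothetical, including `hybrid_answer`, the topic-keyed `knowledge` dictionary, and `stand_in_model`, which only mimics a model tuned to always reply in JSON.

```python
import json

def hybrid_answer(question, knowledge, model):
    """Look up fresh facts (Knowledge), then let the model shape the reply (Behavior)."""
    # Knowledge half: pick every fact whose topic word appears in the question.
    words = set(question.lower().strip("?.! ").split())
    context = [text for topic, text in knowledge.items() if topic in words]
    prompt = "Context:\n" + "\n".join(context) + "\n\nQuestion: " + question
    # Behavior half: the model decides tone and output format.
    return model(prompt)

def stand_in_model(prompt):
    """Placeholder for a fine-tuned model: wraps whatever it is given in JSON."""
    return json.dumps({"answer": prompt})

knowledge = {
    "refund": "Refunds are accepted within 30 days.",  # placeholder fact
    "revenue": "Placeholder revenue note.",            # placeholder fact
}

print(hybrid_answer("What is the refund window?", knowledge, stand_in_model))
```

Swapping `stand_in_model` for a real model call changes how the answer is phrased, while editing `knowledge` changes what the answer says. The two concerns stay independent.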

Conclusion: Don’t Lobotomize Your Model

Fine-tuning can be destructive. It can make the model forget parts of its original general knowledge, a problem known as catastrophic forgetting. Don’t perform brain surgery when all you needed was a pair of reading glasses.

Stop Guessing. Start Architecting.

Should you spend $50k on training or $500 on vector search? Don’t burn your budget on the wrong choice.

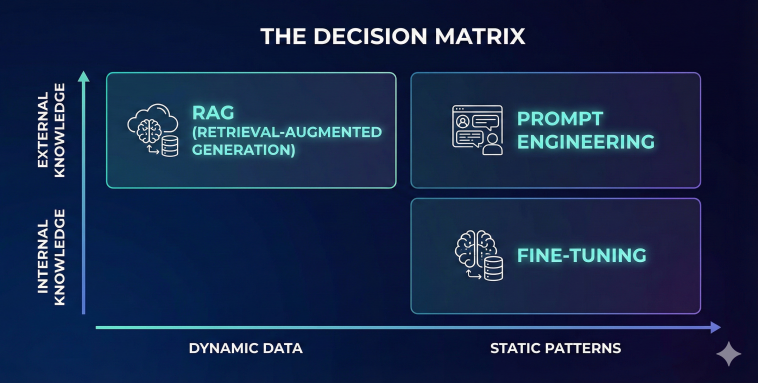

Understanding the difference between “Knowledge” and “Behavior” is step one. Step two is applying a rigorous decision matrix to every new AI project to prevent over-engineering.

We use a proprietary AI Architecture Framework at GYSP to help enterprises choose the right tool—Vector DB vs. GPU Cluster—saving them thousands in wasted compute.

Stop guessing about your model strategy. Use the exact diagnostic tool we use with our enterprise clients.

Take the RAG vs. Fine-Tuning Assessment Below 👇