The "Hello World" of AI

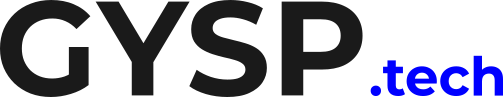

Building a “Chat with your PDF” app is the “Hello World” of 2025. You can do it in 15 minutes with a YouTube tutorial. But there is a massive gap between a 15-minute prototype and an enterprise system.

We see it constantly: A client builds a bot. It looks great in the demo. Then they ask: “What is the price of SKU-12345?” And the bot replies: “I don’t have that information,” even though it’s right there in the document.

The problem isn’t the LLM. The problem is your Retrieval Strategy. You are relying on “Naive RAG.”

Why Vector Search Isn't Magic

Naive RAG relies 100% on Vector Search (Semantic Similarity). Vectors are amazing at concepts.

Query: “Apple” -> Match: “Fruit,” “Red,” “Pie.”

Query: “ISO-9001” -> Match: … ?

Vectors are terrible at Exact Matches (Acronyms, IDs, Dates, Names), because an embedding captures what a passage is about, not the exact strings it contains. If your user searches for a specific error code, “ERR-505,” a vector search might return “General System Errors” instead of the specific page for 505.

The Fix = Hybrid Search

To fix this, you need Hybrid Search. You run two searches in parallel:

Dense Retrieval (Vectors): Finds the meaning (Concepts).

Sparse Retrieval (BM25/Keywords): Finds the exact words (IDs, Names).
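To make the sparse side concrete, here is a minimal sketch of BM25 scoring in plain Python. The tokenizer, the sample documents, and the parameter values `k1=1.5` and `b=0.75` are illustrative assumptions, not a recommendation; a production system would normally rely on a search engine or a maintained library rather than hand-rolled scoring.

```python
import math
import re
from collections import Counter

def tokenize(text):
    # Lowercase and keep hyphenated IDs such as "err-505" as single tokens.
    return re.findall(r"[a-z0-9]+(?:-[a-z0-9]+)*", text.lower())

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Return one BM25 score per document (illustrative, unoptimized)."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs

    # Document frequency: in how many documents each term appears.
    df = Counter()
    for tokens in tokenized:
        df.update(set(tokens))

    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            # Rare terms (like a specific error code) get a higher weight.
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term frequency, dampened and normalized by document length.
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

# Made-up documents, purely for illustration.
docs = [
    "General system errors and how to troubleshoot them",
    "ERR-505: the payment gateway timed out",
    "ERR-404: the requested page was not found",
]
scores = bm25_scores("What does ERR-505 mean?", docs)
best = max(range(len(docs)), key=scores.__getitem__)
print(docs[best])
```

Only the document that literally contains the token `err-505` receives a non-zero score here, which is exactly the behavior a pure vector search cannot guarantee.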

You combine these results using an algorithm called Reciprocal Rank Fusion (RRF). Suddenly, your bot understands concepts AND specifics.
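As a rough sketch of how RRF works: each document earns 1 / (k + rank) from every result list it appears in, and the contributions are summed. Only ranks are used, so the dense and sparse scores never have to be put on the same scale. The document IDs below are hypothetical, and `k=60` is a commonly used default that you should treat as a tunable assumption.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranked list.

    rankings: a list of lists, each ordered best-first.
    k: smoothing constant; larger values flatten the gap between ranks.
    """
    fused = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(fused, key=fused.get, reverse=True)

# Hypothetical result lists from the two retrievers.
dense = ["errors-overview", "err-505-page", "faq"]
sparse = ["err-505-page", "changelog", "errors-overview"]

fused = reciprocal_rank_fusion([dense, sparse])
print(fused)
```

In this toy case the specific error page ranks first after fusion, because it sits near the top of both lists, while the generic overview page only tops one of them.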

The "Second Opinion" (Re-Ranking)

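Even with Hybrid Search, you might get 50 documents back. Sending all 50 to GPT-4 is expensive and can confuse the model: this is the “Lost in the Middle” phenomenon, where models tend to overlook relevant information buried in the middle of a long context.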

You need a Re-Ranker. This is a small, specialized model (Cross-Encoder) that acts like a strict editor. It looks at your top 50 results and scores them: “Is this ACTUALLY relevant to the question?” It throws away the trash and sends only the Top 5 Gold Standard chunks to the LLM.
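The re-ranking step can be sketched as a small function that scores every (question, chunk) pair and keeps the best few. In the sketch below, `score_fn` is a hypothetical placeholder for a real Cross-Encoder model, and `toy_overlap_score` is a deliberately crude word-overlap stand-in so the example runs without downloading anything. The candidate passages are made up.

```python
from typing import Callable, List

def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float],
    top_n: int = 5,
) -> List[str]:
    """Score each (query, passage) pair and keep the top_n passages."""
    scored = [(score_fn(query, passage), passage) for passage in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for _, passage in scored[:top_n]]

def toy_overlap_score(query: str, passage: str) -> float:
    # Stand-in scorer (assumption): fraction of query words found in the passage.
    # A real re-ranker would run a Cross-Encoder over the pair instead.
    q_words = set(query.lower().split())
    p_words = set(passage.lower().split())
    return len(q_words & p_words) / len(q_words) if q_words else 0.0

candidates = [
    "Shipping policy for international orders",
    "The price of SKU-12345 is listed in the product catalog",
    "Our price list is updated every quarter",
]
top = rerank("price of SKU-12345", candidates, toy_overlap_score, top_n=2)
print(top)
```

Keeping the scorer as a parameter means the retrieval code does not change when you swap the toy function for a real model; only `score_fn` does.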

Conclusion: Accuracy is an Engineering Problem

Stop trying to “Prompt Engineer” your way out of bad data retrieval. If the LLM doesn’t see the right data, it can’t give the right answer. Fix the pipeline, and most retrieval-driven hallucinations fix themselves.

Move Beyond “Naive RAG”

Audit your Chunking, Search, and Ranking strategy.

Recognizing that ‘Naive RAG’ is a sunk cost is step one. Step two is identifying exactly where your infrastructure is hemorrhaging capital on hallucinations and inaccurate outputs.

At GYSP, we use our proprietary ‘Verified-Retrieval’ Framework to help enterprises transition from ‘Toy Bots’ to high-integrity Enterprise AI Systems that don’t lie to your customers or your board.

Stop guessing about your AI’s accuracy. Use the exact diagnostic tool we use with our enterprise clients to measure your RAG maturity and identify your blind spots.

Take the RAG Architecture Assessment Below👇