The "Read-Only" Problem

For the last two years, we have been building “Read-Only” AI. We built RAG bots that can read 10,000 PDFs and answer questions about them accurately. This is valuable. But it is passive.

If you ask your banking bot: “Transfer $500 to my savings,” it usually replies: “Here is a link to the transfer page. You can do it there.”

This is the friction point. The future isn’t a bot that tells you how to work. It’s a bot that does the work. Welcome to the era of AI Agents.

What makes an Agent? (Tools + Reasoning)

A Chatbot is an LLM connected to a Database. An Agent is an LLM connected to Tools (APIs).

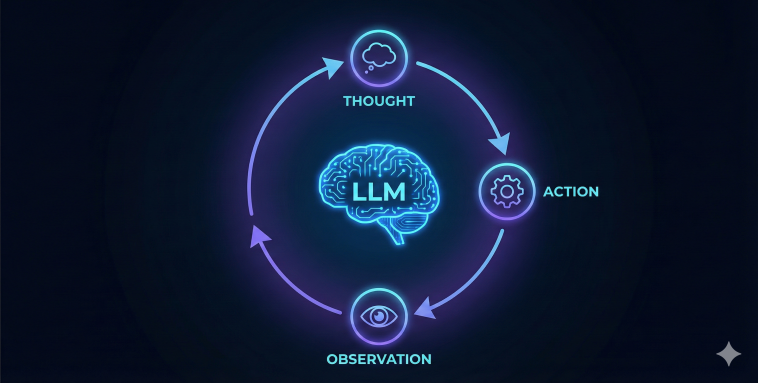

The architecture shifts from a straight line (Input -> Retrieval -> Output) to a Loop. We use a pattern called ReAct (Reason + Act).

Thought: “The user wants to book a flight.”

Action: Call flight_search_api.

Observation: “Found flight BA123.”

Thought: “I need to confirm the price.”

Action: Call pricing_api.

The AI is no longer just a writer; it is an orchestrator.
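To make the loop concrete, here is a minimal sketch of the Thought / Action / Observation cycle from the trace above. It is an illustration under stated assumptions, not a production design: `scripted_policy` stands in for the LLM, the two tool functions are hypothetical stubs named after the trace, and the route and price are made-up sample values.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools standing in for real APIs; names echo the trace above.
def flight_search_api(query: str) -> str:
    return "Found flight BA123."

def pricing_api(flight: str) -> str:
    return "BA123 costs $420."  # made-up sample price

TOOLS: Dict[str, Callable[[str], str]] = {
    "flight_search_api": flight_search_api,
    "pricing_api": pricing_api,
}

# A step is (thought, tool_name, payload). tool_name=None means "finish",
# in which case payload is the final answer.
Step = Tuple[str, Optional[str], str]

def scripted_policy(history: List[str]) -> Step:
    """Stand-in for the LLM: chooses the next step from past observations."""
    seen = [h for h in history if h.startswith("Observation:")]
    if len(seen) == 0:
        return ("The user wants to book a flight.", "flight_search_api", "LHR to JFK")
    if len(seen) == 1:
        return ("I need to confirm the price.", "pricing_api", "BA123")
    return ("I have the flight and the price.", None, "BA123 is available for $420.")

def run_agent(policy: Callable[[List[str]], Step],
              max_steps: int = 5) -> Tuple[str, List[str]]:
    """Run the Reason + Act loop until the policy finishes or max_steps is hit."""
    history: List[str] = []
    for _ in range(max_steps):
        thought, tool_name, payload = policy(history)
        history.append(f"Thought: {thought}")
        if tool_name is None:
            return payload, history
        history.append(f"Action: {tool_name}({payload!r})")
        observation = TOOLS[tool_name](payload)  # act, then feed the result back
        history.append(f"Observation: {observation}")
    raise RuntimeError("Agent did not finish within max_steps")
```

Calling `run_agent(scripted_policy)` returns the final answer together with the full trace. In a real system the policy would be a model call that receives the history as context, and the tool registry would wrap actual API clients.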

The Danger Zone (Infinite Loops)

Agents are powerful, but they are dangerous. If a chatbot hallucinates, it gives you bad text. If an Agent hallucinates, it might refund the wrong customer or delete a production table.

We have seen Agents get stuck in “Infinite ReAct Loops”: trying to solve a problem, failing, retrying, and burning $500 in API credits in 10 minutes.
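One common defence against runaway loops is a hard budget on steps and spend. The sketch below is one possible shape for such a guard, not a reference implementation: `RunBudget`, `BudgetExceeded`, and `run_with_budget` are hypothetical names, and the per-attempt cost is assumed to be reported by whatever makes the API call.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

class BudgetExceeded(Exception):
    """Raised when an agent run hits its step or cost limit."""

@dataclass
class RunBudget:
    max_steps: int
    max_cost_usd: float
    steps: int = 0
    cost_usd: float = 0.0

    def start_step(self) -> None:
        """Refuse to start another step once either limit has been reached."""
        if self.steps >= self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.cost_usd >= self.max_cost_usd:
            raise BudgetExceeded(f"cost limit of ${self.max_cost_usd:.2f} reached")
        self.steps += 1

def run_with_budget(attempt: Callable[[], Tuple[bool, float]],
                    budget: RunBudget) -> None:
    """Retry attempt() until it succeeds or the budget stops the run.

    attempt() is assumed to return (done, cost_in_usd) for one try.
    """
    while True:
        budget.start_step()            # checked before any money is spent
        done, cost = attempt()
        budget.cost_usd += cost
        if done:
            return
```

Because the check runs before each attempt, spend can overshoot the cost limit by at most one attempt. A caller would typically catch `BudgetExceeded`, log the trace, and hand the task to a human rather than retry.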

Is your infrastructure ready for Agents? Agents require different logs, different security, and different guardrails than Chatbots.

The barrier to entry for Agents isn’t intelligence; it’s safety.

The Safety Switch (Human-in-the-Loop)

How do you deploy Agents safely? You implement “Human-in-the-Loop” (HITL) Guardrails.

For “Low Stakes” actions (Search, Summarize), the Agent can run autonomously. For “High Stakes” actions (Transfer Money, Delete File), the Agent must pause and request permission.

Agent: “I have prepared the refund for User X. Approve?”

Human: [Click Approve]

Agent: “Action executed.”
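The approval flow above can be sketched as a small gate in front of every action. This is a minimal illustration under assumptions: the `ACTION_RISK` table, the action names, and the `approve` callback (which in practice might be a button in a review UI) are all hypothetical.

```python
from enum import Enum
from typing import Callable, Dict

class Risk(Enum):
    LOW = "low"
    HIGH = "high"

# Assumed classification, mirroring the examples in the text.
ACTION_RISK: Dict[str, Risk] = {
    "search": Risk.LOW,
    "summarize": Risk.LOW,
    "transfer_money": Risk.HIGH,
    "delete_file": Risk.HIGH,
}

def execute_action(name: str,
                   run: Callable[[], None],
                   approve: Callable[[str], bool]) -> str:
    """Run low-stakes actions directly; pause high-stakes ones for a human."""
    # Actions missing from the table are treated as high stakes.
    risk = ACTION_RISK.get(name, Risk.HIGH)
    if risk is Risk.HIGH and not approve(f"I have prepared '{name}'. Approve?"):
        return "Action cancelled."
    run()
    return "Action executed."
```

Defaulting unknown actions to high stakes is a deliberate choice in this sketch: a newly added tool then needs approval until someone explicitly classifies it as safe.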

Conclusion: The Future is Active

The “Chat” interface is just a phase. Soon, AI will be a background service that silently fixes bugs, updates records, and manages schedules. But to get there, you need to architect for Action, not just Retrieval.

Design Your First Agent

Don’t let AI run wild. Learn the architecture of safe autonomy.

Understanding that Agents are the future is step one. Step two is ensuring they don’t accidentally delete your database.

We use a proprietary Agent Safety Framework at GYSP to help enterprises design Human-in-the-Loop workflows, API guardrails, and “Kill Switches” for autonomous AI.

Stop fearing the future. Use the exact diagnostic tool we use with our enterprise clients to measure your Agent Readiness.

Take the Agent Readiness Assessment Below👇