The "Empty Sandbox" Problem

You have the budget.

You have the developers.

You have the AI architecture.

But your project is stalled. Why? Because your Chief Information Security Officer (CISO) just said: “You absolutely cannot put real customer emails into this testing environment.”

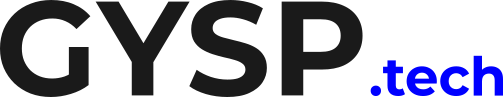

Welcome to the Privacy Wall. Real enterprise data is messy, highly sensitive, and legally protected by GDPR or HIPAA. Scrubbing Personally Identifiable Information (PII) out of 100,000 documents takes months. So, your developers are stuck building a high-tech AI in an empty sandbox.

The Synthetic Solution

If you can’t use real data, you must manufacture it. Synthetic Data is artificial data generated by a large AI model (like Claude 3.5 or GPT-4o) that mimics the statistical properties of your real data, but contains zero real people, real account numbers, or real secrets.

You prompt the large model: “Generate 500 varied customer support transcripts about a lost credit card. Use different tones (angry, confused, polite). Use fictional names and addresses.” Instantly, you have a massive, perfectly labeled dataset.

The "Teacher-Student" Paradigm

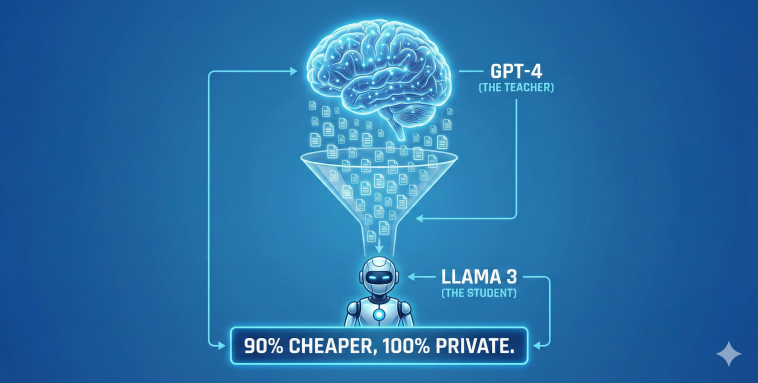

Why generate data if you already have GPT-4? Because GPT-4 is expensive to run in production. The smartest Enterprise architecture in 2026 is the Teacher-Student model:

The Teacher (GPT-4): Generates 10,000 synthetic, high-quality examples of a specific task (e.g., routing support tickets).

The Student (Llama 3 8B): You fine-tune this tiny, open-source model on the Teacher’s synthetic data.

The result? The tiny model learns to perform that one specific task as well as the massive model, but it runs locally in your VPC for 1/10th the cost.

Is data scarcity blocking your AI? Find out if Synthetic Data can solve your privacy and testing bottlenecks.

The "Empty Sandbox" problem is killing AI projects. 🏖️ You have the architecture, but you aren't allowed to touch production data because of PII/GDPR. The fix is Synthetic Data. Read the full Strategy + Get your score🧵#Datascience #AI #ML

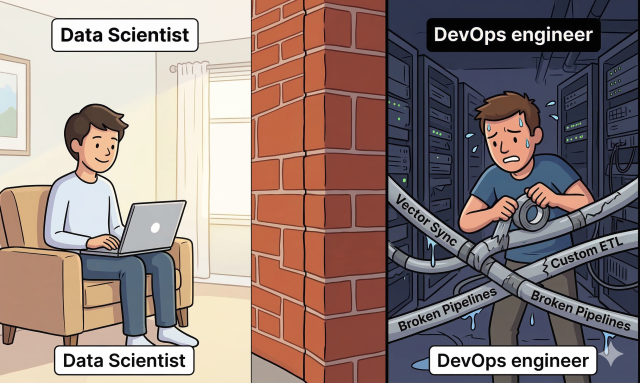

Simulating the "Black Swan"

Real data has a flaw: it is mostly normal.

If you want to test how your AI handles an incredibly rare edge case (a “Black Swan” event)—like a customer requesting a refund in a mix of French and English while citing a discontinued 2018 policy—you might not have a historical record of that.

With Synthetic Data, you don’t have to wait for it to happen. You can explicitly instruct the AI to generate a dataset composed entirely of extreme edge cases.

You can stress-test your system before it ever hits production.

Conclusion: Clean Energy for AI Data used to be the “new oil”—valuable, but hard to extract, dirty, and heavily regulated. Synthetic data is the “new solar power”—infinite, clean, and generated exactly where you need it. Stop fighting InfoSec for access to production databases. Start generating your own.

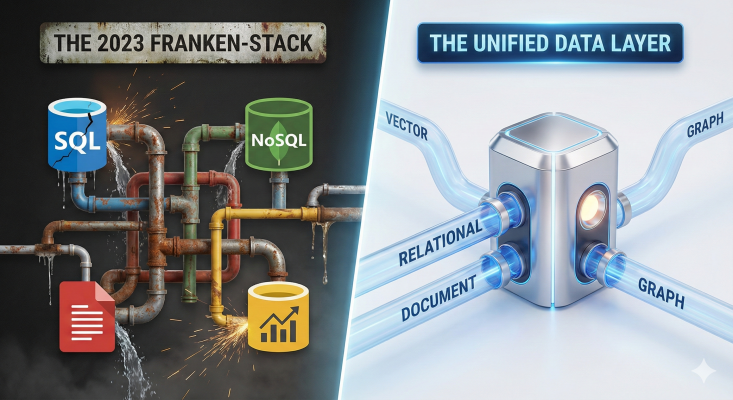

Understanding that Synthetic Data solves the ‘Privacy Wall’ is step one. Step two is actually generating and deploying that data without building massive, brittle ETL pipelines to move it around.

At GYSP, we use our proprietary Unified Data Architecture to help enterprises generate, vectorize, and augment synthetic training data in place (using systems like PostgreSQL with pgvector). This eliminates the nightmare of fragmented data movement, slashes unnecessary third-party integration bills, and keeps your AI training environment inside a 100% secure, governable perimeter.

Stop wrestling with disconnected databases just to feed your models. Use the exact diagnostic tool we use with our enterprise clients to measure your team’s readiness for automated data augmentation.

Take the Synthetic Data Readiness Scorecard Below 👇