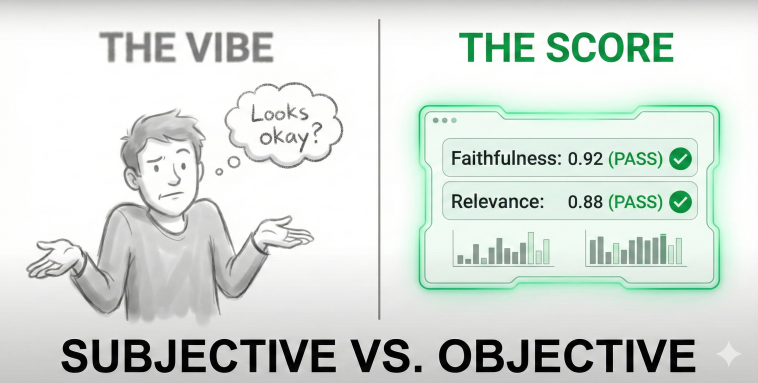

The "Vibe Check" Trap

How do you test traditional software? You write a Unit Test.

Input: 2 + 2Expected Output: 4Pass/Fail: Pass.

How do most teams test Generative AI?

Input: "Write a poem about taxes."Output: [A poem about taxes].Pass/Fail:“Eh, looks pretty good to me.”

We call this “Vibe-Based Development.” It works for prototypes. It is catastrophic for production. If you change a prompt to fix one bug, you often silently break ten other things. Without automated testing, you won’t know until a customer complains.

Defining the "Unit Test" for AI

You cannot write traditional assert equals tests for AI because the output changes every time. Instead, you need Semantic Metrics. For a RAG (Chat with Data) system, you need to measure two things:

Faithfulness: Did the AI hallucinate info not present in the source text?

Answer Relevance: Did the AI actually answer the user’s question?

To measure this, you need a “Golden Dataset”—a list of 50-100 question/answer pairs that represent “Perfect Behavior.”

The Automator (LLM-as-a-Judge)

You can’t hire 100 humans to read every log. You need “LLM-as-a-Judge.”

This is an architecture where you use a highly intelligent model (like GPT-4) to grade the homework of your production model.

Production Model: Generates an answer.

Judge Model: Reads the answer and the source text. It assigns a score (0 to 1) based on strict criteria. “Did the answer contain the price? Yes/No.”

This turns “Vibes” into Numbers.

Is your AI regressing? Do you know if your latest prompt change made the bot dumber?

If you can't measure it, you can't improve it. The difference between a Toy and a AI-Product is a Test Suite. Read the AI Evaluation Guide + Audit your AI QA process. #AIEngineering #LLMOps #DataScience #QualityAssurance #techtips

CI/CD for Prompts

Once you have the metrics, you automate them. Every time a developer opens a Pull Request to change a system prompt, your CI/CD pipeline should run the “Golden Dataset” through the Judge. If the Faithfulness Score drops from 92% to 85%, the build fails. No regression. No surprises.

Conclusion: Maturity is Measurable If you can’t measure it, you can’t improve it. Stop guessing. Start testing. The difference between a Toy and a Product is a Test Suite.

Stop Vibe-Checking Build your Golden Dataset and Eval Pipeline today.

Understanding that “Vibes” are dangerous is step one. Step two is building a “Golden Dataset” that proves your AI works.

We use a proprietary AI Evaluation Framework at GYSP to help enterprises implement “LLM-as-a-Judge,” automate their testing, and stop regression before it hits production.

Stop guessing about your bot’s quality. Use the exact diagnostic tool we use with our enterprise clients to measure your QA maturity.

Take the AI QA Assessment Below 👇