The "Binary Jail"

90% of enterprise value is locked in what we call “Binary Jails.” Scanned PDFs. PowerPoint slides. Complex Excel sheets. To an AI, these aren’t “structured data.” They are a mess of pixels and text.

The standard approach? Install a Python library (LangChain or LlamaIndex), run a “split every 1,000 characters” script, and dump the resulting chunks into a vector database. This is why your bot is dumb.

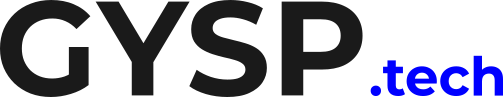

The Table Problem (Naive Chunking)

Imagine a financial report with a table:

Row 1: “Revenue 2023: $1M”

Row 2: “Revenue 2024: $2M”

If your “Chunking Strategy” splits the document right in the middle of the table…

Chunk A: “Revenue 2023: $1M… Revenue 2024:”

Chunk B: “$2M… (Next Section).”

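When the user asks “What was the revenue in 2024?”, neither chunk holds the full fact. If the AI retrieves Chunk B, it sees “$2M” but has lost the label “Revenue 2024.” If it retrieves Chunk A, it sees the label with no value attached. Either way, it has to guess. It hallucinates.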
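The failure is easy to reproduce. The sketch below is a minimal illustration, not any particular library's splitter: `split_by_characters` is a hypothetical helper, and the chunk size of 32 is chosen only so the cut lands inside the second table row of this tiny sample.

```python
def split_by_characters(text: str, chunk_size: int) -> list[str]:
    """Naive fixed-size chunking: cut every chunk_size characters, ignoring structure."""
    return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]


# The two table rows from the example, flattened to plain text.
report = "Revenue 2023: $1M\nRevenue 2024: $2M\n(Next Section)"

# chunk_size=32 is an assumption picked to force the split mid-row.
chunks = split_by_characters(report, chunk_size=32)

for i, chunk in enumerate(chunks):
    print(f"Chunk {i}: {chunk!r}")
```

The first chunk ends with the label “Revenue 2024:” and the second begins with the orphaned “$2M”, which is exactly the split described above.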

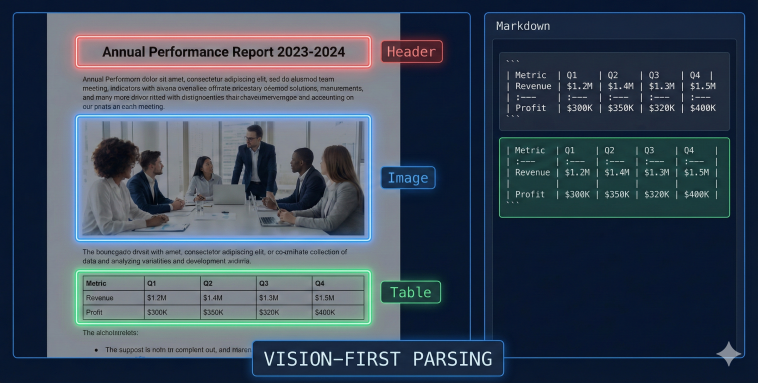

The Fix = Vision-First Ingestion

You cannot treat a PDF as a string of text. You must treat it as an Image. Advanced AI Engineering uses Vision-Language Models (VLMs) or specialized parsers (like Unstructured.io or Azure Document Intelligence) to perform Layout Analysis.

Identify: Detect headers, footers, columns, and tables.

Extract: Convert tables into Markdown or JSON, keeping the headers attached to the data.

Chunk: Split by Section, not by Character.
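Here is a rough sketch of the Extract and Chunk steps, assuming the Identify step has already run. The `Element` class and its fields are hypothetical stand-ins for whatever your layout parser returns; they are not the output schema of Unstructured.io or Azure Document Intelligence.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Hypothetical output of a layout-analysis step (not a real parser's schema)."""
    kind: str                                   # "heading", "paragraph", or "table"
    text: str = ""
    header: list[str] = field(default_factory=list)
    rows: list[list[str]] = field(default_factory=list)


def table_to_markdown(header: list[str], rows: list[list[str]]) -> str:
    """Render a table as Markdown so every row travels with its column headers."""
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join("---" for _ in header) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)


def chunk_by_section(elements: list[Element]) -> list[str]:
    """Start a new chunk at each heading; a table is never split across chunks."""
    chunks: list[str] = []
    current: list[str] = []
    for el in elements:
        if el.kind == "heading" and current:
            chunks.append("\n\n".join(current))
            current = []
        if el.kind == "table":
            current.append(table_to_markdown(el.header, el.rows))
        else:
            current.append(el.text)
    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Sample input mirroring the financial-report example.
elements = [
    Element("heading", "Financial Summary"),
    Element("table", header=["Metric", "Value"],
            rows=[["Revenue 2023", "$1M"], ["Revenue 2024", "$2M"]]),
    Element("heading", "Next Section"),
    Element("paragraph", "Other content."),
]

chunks = chunk_by_section(elements)
print(chunks[0])
```

With this shape, the chunk that contains “$2M” also contains “Revenue 2024” and the table header, so a retriever gets the whole fact or nothing.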

Is your data garbage? Find out if your ingestion pipeline is destroying your context.


Semantic Chunking

Once you have clean text, don’t just split by character count. Split by Meaning. Semantic Chunking uses an embedding model to measure the “topic similarity” between neighboring sentences. If Sentence A and Sentence B are about the same topic, keep them together. If Sentence C starts a new topic, the similarity drops and you start a new chunk. This makes it far more likely that the AI gets a “complete thought” in its context window.
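A minimal sketch of that idea follows. To keep it self-contained, `embed` is a toy bag-of-words stand-in for a real embedding model, and the `threshold` of 0.2 is an arbitrary assumption; in practice you would swap in real embeddings and tune the threshold on your own documents.

```python
import math
import re
from collections import Counter


def embed(sentence: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def semantic_chunks(sentences: list[str], threshold: float = 0.2) -> list[str]:
    """Keep adjacent sentences together while their similarity stays above threshold."""
    if not sentences:
        return []
    groups = [[sentences[0]]]
    for prev, cur in zip(sentences, sentences[1:]):
        if cosine(embed(prev), embed(cur)) >= threshold:
            groups[-1].append(cur)      # same topic: extend the current chunk
        else:
            groups.append([cur])        # topic shift: start a new chunk
    return [" ".join(group) for group in groups]


sentences = [
    "Revenue grew in 2023.",
    "Revenue grew again in 2024.",
    "The office moved to Berlin.",
]

result = semantic_chunks(sentences)
for chunk in result:
    print(chunk)
```

The two revenue sentences share enough vocabulary to stay in one chunk, while the office sentence shares none and opens a new one. A real embedding model would also catch topic matches that share no words, which this toy version cannot.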

Conclusion: Respect the Source

Data Engineering for AI isn’t just moving files from S3 to Pinecone. It is about preserving the meaning of the source material. If you feed your AI broken chunks, don’t be surprised when it gives you broken answers.

Audit Your Pipeline

Stop guessing. Start parsing.

Understanding that naive chunking causes hallucinations is step one. Step two is migrating your ingestion pipeline to a Vision-First, layout-aware architecture.

We use a proprietary Unstructured Data Framework at GYSP to help enterprises parse complex PDFs, preserve table structures in Markdown, and implement semantic chunking to protect context.

Stop shredding your documents. Use the exact diagnostic tool we use with our enterprise clients to measure your ingestion maturity.

Take the Unstructured Data Readiness Assessment Below